You Might Be Invisible to ChatGPT (And Here’s How to Fix It)

You Might Be Invisible to AI... Here Are 3 Website Fixes That Change That

Your website looks great. It loads fast. The photos are professional. Your bio is current and your listings are all there.

And AI tools like ChatGPT still can't tell who you are.

Here's the issue: your website was built for human visitors. The layout is designed for human eyes. The navigation is designed for human behavior. But when AI tools crawl your website to decide whether to recommend you, they don't experience it the way a person does. They're looking for something entirely different, and if it isn't there, they get your details wrong, come away with an incomplete picture, or skip you altogether.

This isn't a criticism of whoever built your site. It's just that web design has always been built around the human experience. AI needs something different.

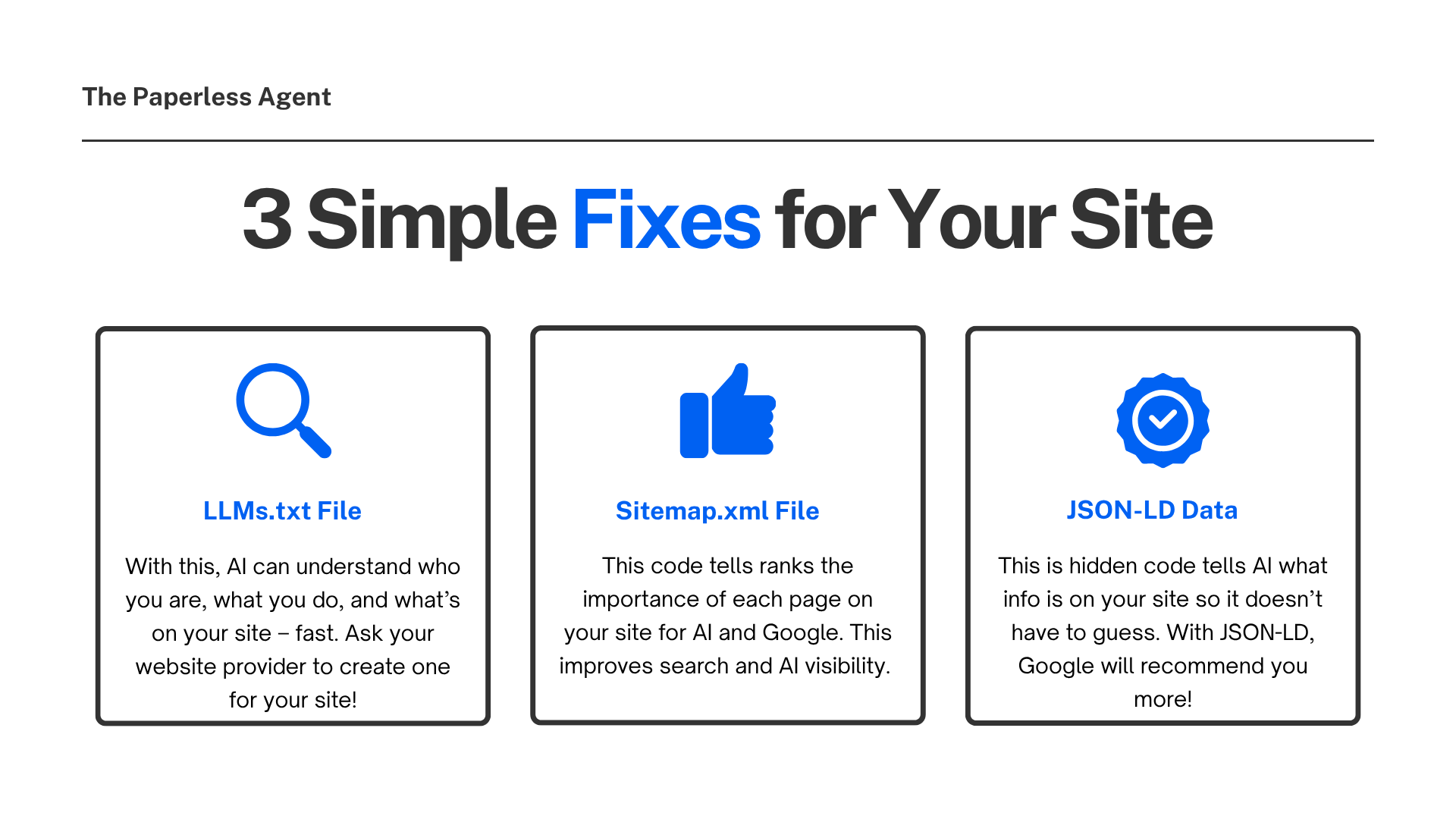

The good news is that fixing this isn't complicated. There are three specific additions you can make to your website that can significantly improve how AI tools read, understand, and ultimately recommend you. Two of them you may already have in place without knowing it. One requires a bit of help from your website provider, but it's worth every minute.

Let's walk through all three.

Why AI Reads Your Website Differently Than a Human Does

When a potential client opens ChatGPT and asks "who should I hire to sell my home in [your city]?" the AI doesn't ask around. It draws on what it has read online, and when it searches the web for an answer, it sends bots to read websites, yours included, then synthesizes what it finds into a recommendation.

The problem is that those bots work a lot like Google's crawlers: they read everything, try to piece it together, and often get it wrong or miss key details. Why? Because your website is full of navigation menus, sidebars, footer links, cookie banners, JavaScript elements, and marketing copy that means something to a human eye but creates noise for a machine trying to understand who you are and what you do.

Without clear guidance, the AI takes its best guess. And its best guess is often incomplete, generic, or outdated.

Some early adopters who have structured their websites for AI readability report more AI referral traffic, and some report that AI-referred visitors convert at higher rates than visitors from traditional search. The opportunity is real, and the fixes are simpler than most agents expect.

Fix #1: The LLMs.txt File: A Cheat Sheet for AI Bots

What it is: A plain text file, written in simple Markdown and saved at the root of your site (yourwebsite.com/llms.txt), that exists specifically so AI tools can quickly understand who you are, what you do, and what's on your site.

Think of it as handing the AI a one-page summary before it has to read through your entire website. Instead of forcing the bot to sift through navigation links, blog posts, and IDX listings to figure out what your site is about, the LLMs.txt file tells it directly.

It works as a counterpart to robots.txt, but for AI understanding rather than crawl control. While robots.txt tells search engines what they can and cannot crawl, the LLMs.txt file helps AI systems interpret your site's structure, intent, and authority.
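For the technically curious, here is a minimal Python sketch of the crawl-control side, using the standard library's urllib.robotparser to check whether a robots.txt file lets OpenAI's GPTBot crawler fetch a page. The robots.txt content and example.com URLs are hypothetical placeholders. The difference matters: if robots.txt blocks an AI crawler, that crawler won't read your site at all, LLMs.txt included.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; your real file lives at
# yourwebsite.com/robots.txt.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules let `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text we already have; no network
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/", "/private/notes.html"):
        url = "https://www.example.com" + path
        print(path, crawler_allowed(ROBOTS_TXT, "GPTBot", url))
```

Nothing in an LLMs.txt file changes these answers; it only helps a crawler that is already allowed in.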

A well-structured LLMs.txt file for a real estate agent typically includes:

- Who you are — Your name, your role, your brokerage

- What you do — The markets you serve, your specializations

- Key contact information — Phone, email, your primary website URL

- Key pages — Links to your about page, listings, neighborhood guides, and contact page

- Recent content — Links to recent blog posts or market updates

- FAQ pages — Where clients can find answers to common questions

That's it. You don't need an essay. A clean, structured summary is enough for an AI bot to walk away with a firm understanding of who you are and what you offer.
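Here is what that outline might look like as an actual file. This minimal Python sketch assembles an LLMs.txt in the layout the llmstxt.org proposal describes: a top-level # heading with your name, a > summary line, then ## sections of links with short notes. Every name, phone number, and URL is a hypothetical placeholder, and a hand-written text file works just as well; the script only shows the structure.

```python
# Hypothetical agent details; replace every value with your own.
AGENT = {
    "name": "Jane Doe, Example Realty",
    "summary": "Licensed real estate agent serving the Austin, TX metro area, "
               "specializing in luxury homes.",
    "contact": "Phone: (512) 555-0100 | Email: jane@example.com | "
               "Website: https://www.example.com",
}

# Each section lists (link title, URL, short note) entries.
SECTIONS = {
    "Key pages": [
        ("About Jane", "https://www.example.com/about", "Bio, brokerage, and credentials"),
        ("Listings", "https://www.example.com/listings", "Current homes for sale"),
        ("Contact", "https://www.example.com/contact", "How to reach Jane"),
    ],
    "Recent content": [
        ("Austin market update", "https://www.example.com/blog/market-update",
         "Monthly local market report"),
    ],
    "FAQ": [
        ("Seller FAQ", "https://www.example.com/faq", "Answers to common questions from sellers"),
    ],
}


def build_llms_txt(agent: dict, sections: dict) -> str:
    """Assemble the file: # title, > summary, contact line, then ## link sections."""
    lines = [f"# {agent['name']}", "", f"> {agent['summary']}", "", agent["contact"], ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"  # exactly one trailing newline


if __name__ == "__main__":
    print(build_llms_txt(AGENT, SECTIONS), end="")
```

The output is an ordinary text file, so you (or your provider) can save it as llms.txt and edit it by hand from then on.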

The LLMs.txt standard is relatively new. It was proposed in 2024, and major companies including Anthropic, Cloudflare, and Stripe have already implemented it. It's still an emerging standard, and no major AI platform has officially confirmed that it reads these files. But the practical upside is clear and the downside is essentially zero: the file sits harmlessly on your site if it goes unused, and positions you well if and when broader adoption follows.

The Yoast SEO plugin for WordPress can now generate an LLMs.txt file automatically, prioritizing cornerstone content and recent posts and refreshing the file weekly. If your site runs on WordPress and you use Yoast, check whether the feature is switched on in the plugin's settings; you may already be partway there.

Ask your website provider whether an LLMs.txt file exists on your site. If it doesn't, they can create one. It's a simple text file, not a code project. If you want to draft the content yourself, use the categories above as your outline and keep each section brief and clear.

Fix #2: The Sitemap.xml File: The Table of Contents for Your Website

What it is: A file that tells Google and AI crawlers which pages exist on your website and when each one was last updated.

If the LLMs.txt file is a summary of who you are, the sitemap is a map of every room in your house. It ensures nothing gets missed or ignored when a crawler comes through.

When an AI tool crawls your website, it needs to know where to look. Without a sitemap, it might miss your neighborhood pages, skip your testimonials page, or never find the FAQ section you spent hours writing. The sitemap eliminates that guesswork.

Here's what a sitemap does in practice: it lists the URLs on your site in a structured format and notes when each page was last updated. (The format also allows an optional priority value for each page, but Google has said it ignores that field.) The last-updated date matters, because search engines and AI tools tend to favor fresh, current content.
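To show what those entries look like, here is a minimal Python sketch that writes a sitemap with the standard library's xml.etree.ElementTree. The page URLs and dates are hypothetical placeholders, and it leaves out the optional priority and changefreq fields, which Google has said it ignores. In practice your platform or plugin builds this file for you.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Return sitemap XML with one <url> entry (address + last-updated date) per page."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()  # YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages and dates.
    print(build_sitemap([
        ("https://www.example.com/", date(2025, 1, 15)),
        ("https://www.example.com/neighborhoods/zilker", date(2025, 1, 10)),
    ]))
```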

The good news: most modern website platforms generate a sitemap automatically. If your site was built on WordPress, Squarespace, Wix, or any major real estate website platform, there is a very good chance your sitemap already exists. You can check by typing your website URL followed by /sitemap.xml into a browser; on WordPress, also try /sitemap_index.xml (used by Yoast) or /wp-sitemap.xml (WordPress's built-in sitemap). If a page of structured data appears, you have one.
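If you're comfortable with a little scripting, this minimal Python sketch does the same check: it tries common sitemap addresses and lists the page URLs it finds. The candidate paths are assumptions based on common platform defaults, and the function names are made up for this example. It also recognizes a sitemap index (a sitemap that points to other sitemaps), which is what Yoast produces.

```python
import xml.etree.ElementTree as ET
from urllib.request import urlopen

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"

# Assumed common locations: generic default, Yoast on WordPress, WordPress core.
CANDIDATE_PATHS = ("/sitemap.xml", "/sitemap_index.xml", "/wp-sitemap.xml")


def list_sitemap_urls(xml_text: str) -> tuple[str, list[str]]:
    """Return ("urlset" or "sitemapindex", [every <loc> address in the file])."""
    root = ET.fromstring(xml_text)
    kind = root.tag.removeprefix(SITEMAP_NS)
    if kind not in ("urlset", "sitemapindex"):
        raise ValueError(f"not a sitemap: root element is {root.tag!r}")
    locs = [el.text.strip() for el in root.iter(SITEMAP_NS + "loc") if el.text]
    return kind, locs


def find_sitemap(site: str):
    """Try each candidate address on a live site (needs network access).

    Returns (address, kind, urls) for the first one that parses, or None.
    """
    for path in CANDIDATE_PATHS:
        address = site.rstrip("/") + path
        try:
            with urlopen(address, timeout=10) as response:
                text = response.read().decode("utf-8", errors="replace")
            kind, urls = list_sitemap_urls(text)
        except (OSError, ET.ParseError, ValueError):
            continue  # missing page, network error, or not a sitemap
        return address, kind, urls
    return None
```

Calling find_sitemap with your own address (for example, "https://yourwebsite.com") returns the first sitemap it finds, or None if none of the candidates work. A None result is a prompt to ask your provider, not proof that no sitemap exists, since some platforms use other addresses.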

If you use WordPress, the Yoast SEO plugin handles sitemap generation automatically and keeps it updated as you add new content.

The sitemap.xml file isn't just for AI. It's the same file Google has used for years to discover the pages it crawls and indexes. Keeping it accurate helps your traditional search visibility and your AI visibility at the same time: one fix, two benefits.

Check whether your sitemap exists using the method above. If it doesn't, ask your website provider to create one or install a plugin like Yoast that handles it automatically. If it does exist, make sure it's being updated regularly as you add new pages and content.

Fix #3: JSON-LD Structured Data: Labels That Tell AI Exactly What It's Looking At

What it is: A piece of hidden code added to your website pages that tells AI tools and search engines exactly what type of information is on each page without any guessing.

This is the most powerful of the three fixes, and the one most real estate agents haven't touched.

When you write on your homepage that you're "a top-producing Realtor serving the Austin metro area specializing in luxury homes," a human reader understands exactly what that means. An AI bot reading the same page sees text — but without structured labels, it has to guess at the meaning. Is this a business? A person? A listing? What city? What's the phone number?

When you add this kind of schema markup (structured labels that use the shared Schema.org vocabulary, written in JSON-LD format), search engines and AI tools see a structured entity rather than just a page of text: a specific real estate agent with a name, location, phone number, service areas, and credentials.

JSON-LD removes most of the guessing. Instead of hoping the AI correctly interprets what it reads, you're giving it direct, unambiguous labels. For a real estate agent, those labels typically cover:

- Your full name and professional title

- Your brokerage name and affiliation

- Your phone number and email

- The geographic areas you serve

- Your professional credentials and licenses

- Your website URL and social profiles

- Reviews and ratings associated with your name

When your agent profile is properly marked up with JSON-LD, AI tools can confidently highlight your information and reference your business in answers because they're not guessing anymore. They're reading a label.
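Here is a minimal Python sketch of a RealEstateAgent profile in JSON-LD, covering several of the labels listed above. Every value (name, brokerage, phone, license, URLs) is a hypothetical placeholder, and the property choices, such as parentOrganization for the brokerage and hasCredential for the license, are one reasonable way to express this with Schema.org terms rather than the only one. Reviews are left out to keep it short.

```python
import json

# Hypothetical agent profile; every value is a placeholder to replace.
AGENT_PROFILE = {
    "@context": "https://schema.org",
    "@type": "RealEstateAgent",
    "name": "Jane Doe",
    "url": "https://www.example.com",
    "telephone": "+1-512-555-0100",
    "email": "jane@example.com",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Austin",
        "addressRegion": "TX",
        "postalCode": "78701",
        "addressCountry": "US",
    },
    # Geographic areas served.
    "areaServed": [
        {"@type": "City", "name": "Austin"},
        {"@type": "City", "name": "Round Rock"},
    ],
    # Brokerage affiliation.
    "parentOrganization": {"@type": "Organization", "name": "Example Realty"},
    # Professional license.
    "hasCredential": {
        "@type": "EducationalOccupationalCredential",
        "credentialCategory": "license",
        "name": "Texas real estate sales agent license",
    },
    # Social profiles.
    "sameAs": [
        "https://www.linkedin.com/in/example",
        "https://www.facebook.com/example",
    ],
}


def to_script_tag(data: dict) -> str:
    """Wrap the profile in the <script> tag that gets pasted into the page's HTML."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text like "</script>" inside a value can't end the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(to_script_tag(AGENT_PROFILE))
```

The printed tag is what a website provider would place in the page; none of it shows up for visitors.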

Google recommends the JSON-LD format for schema markup because it sits apart from your page's visible HTML, which makes it easier to maintain and unlikely to interfere with your website's visual presentation. It can also make your pages eligible for Google's "rich results," the enhanced search listings that show additional information such as business hours and contact details directly in search results. (Star ratings are the exception for most agents: Google doesn't show review stars for reviews a business publishes about itself on its own site.)

One fix. Improved AI visibility. Better traditional search visibility. Business details that match what Google already knows about you. It all connects.

Is it technical?

Somewhat, yes. JSON-LD is code that usually goes in the head section of your website pages, and a single formatting error, such as a missing comma or quotation mark, can stop it from being read at all. This is not a fix to attempt by copying code blindly from the internet. Your website provider can implement it cleanly, and once it's in place you can use a free tool like Google's Rich Results Test to confirm it's working correctly.
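To see why one stray comma matters, here is a minimal Python sketch that pulls every JSON-LD block out of a page's HTML with the standard library's html.parser and checks that each one is valid JSON. The class and function names are made up for this example. It only catches syntax errors; it doesn't replace Google's Rich Results Test, which also checks whether the content qualifies for rich results.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text inside every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self.blocks: list[str] = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data  # text may arrive in several chunks


def check_json_ld(html: str) -> list[tuple[bool, str]]:
    """Return one (ok, message) pair per JSON-LD block found in the page."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    results = []
    for number, block in enumerate(extractor.blocks, start=1):
        try:
            data = json.loads(block)
        except json.JSONDecodeError as err:
            results.append((False, f"block {number}: invalid JSON ({err.msg} at line {err.lineno})"))
            continue
        kind = data.get("@type", "unknown") if isinstance(data, dict) else "multiple items"
        results.append((True, f"block {number}: valid JSON, @type {kind}"))
    return results
```

A trailing comma after the last property, which many hand-edited snippets contain, is enough to make a block fail this check.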

Free schema generators let you enter your business details and produce ready-to-paste code without any technical skills. You can then hand that code to your website provider to implement.

For WordPress users, plugins like Yoast SEO and Rank Math handle much of this automatically, generating the appropriate schema based on the content of each page.

How These Three Fixes Work Together

Each of these additions solves a different part of the same problem — your website being built for humans rather than AI.

The LLMs.txt file gives AI a quick, clear summary of who you are and what your site contains before it ever digs deeper.

The sitemap.xml ensures that when AI does dig deeper, it finds every page, not just the ones it happens to stumble onto.

The JSON-LD structured data makes sure that what it finds on those pages is interpreted correctly — with clean, accurate labels rather than guesswork.

Together, they transform your website from something AI has to work to understand into something it can read confidently and recommend accurately. None of them change your website visually. Your visitors will never see any of this. But the AI bots crawling your site in the background, the ones deciding whether to mention your name the next time a prospective seller asks ChatGPT who to hire, will have a much easier time reading it the next time they come through.

What to Do This Week

You don't have to tackle all three at once. Here's a prioritized starting point:

Step 1: Check for your sitemap. Go to yourwebsite.com/sitemap.xml (many WordPress sites use /sitemap_index.xml or /wp-sitemap.xml instead). If it loads, you have one. If not, contact your website provider.

Step 2: Ask your website provider, or check your SEO plugin, whether an LLMs.txt file exists on your site. If it doesn't, ask them to create one using the framework outlined in this post.

Step 3: Discuss JSON-LD implementation with your website provider. Show them this post. Ask specifically about adding RealEstateAgent or LocalBusiness schema markup to your homepage and about page. This is the most impactful of the three fixes; it comes last here only because it takes the most effort, so don't let it slide once the first two are done.

This is not a large project. For a web developer, these are hours of work, not weeks. And the agents who take care of this now — while most of their competition hasn't started — will have a meaningful head start as AI search continues to grow.

Frequently Asked Questions

Q: Do I need all three of these fixes, or just one? All three work together and address different parts of the same problem, but they're not equally difficult to implement. The sitemap is likely already in place. The LLMs.txt file is simple to create. JSON-LD is the most technical but also the most impactful. If you can only tackle one, make it JSON-LD, since it does the most work for both AI visibility and traditional search.

Q: Will my website provider know what these are? Most experienced web developers will recognize all three. JSON-LD and sitemap.xml have been standard SEO practice for years. LLMs.txt is newer, so it's worth sharing this post with your provider as context. If they're unfamiliar, it may be worth consulting someone with experience in AI search optimization.

Q: I use a template website provided by my brokerage. Can I still make these changes? It depends on how much control you have over your site. Many brokerage-provided sites are templated and limit customization. In that case, your best path is to either request these additions from your broker's tech team or consider building your own website where you have full control over the backend.

Q: How do I know if my JSON-LD is set up correctly? Use Google's free Rich Results Test at search.google.com/test/rich-results. Paste your website URL and it will scan the page and tell you whether structured data is present, what type it is, and whether there are any errors. You can also use Schema.org's validator at validator.schema.org for a more detailed check.
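Before pasting code into either validator, a quick local sanity check can catch missing basics. This minimal Python sketch checks a JSON-LD snippet for name and address, which Google's local business structured data documentation lists as required, plus telephone and url, two of its recommended properties. The property lists and function name are assumptions for illustration; treat it as a rough pre-check, not a substitute for the tools above.

```python
import json

# Google's local business structured data docs list name and address as
# required; telephone and url are two of its recommended properties.
REQUIRED = ("name", "address")
RECOMMENDED = ("telephone", "url")


def precheck(json_ld_text: str) -> dict[str, list[str]]:
    """Report which basic properties are missing from one JSON-LD object."""
    data = json.loads(json_ld_text)  # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a single JSON-LD object")
    return {
        "missing_required": [key for key in REQUIRED if not data.get(key)],
        "missing_recommended": [key for key in RECOMMENDED if not data.get(key)],
    }
```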

Q: Does having these fixes guarantee ChatGPT will recommend me? No — and be skeptical of anyone who claims otherwise. These fixes improve your website's readability and clarity for AI tools, which increases the likelihood of being understood and recommended. But AI recommendations also depend on your overall digital footprint — your reviews, your bios on other platforms, your Google Business Profile, and the quality of your content. These three fixes are one important layer of a broader strategy.

Q: How often do I need to update these files? The sitemap updates automatically if you use an SEO plugin. The LLMs.txt file should be reviewed and updated whenever significant changes happen to your website — new service areas, new contact information, new key pages. JSON-LD should be updated whenever the information it references changes, like if you move offices or add a new specialization. An annual review of all three is a reasonable minimum.

Q: Is the LLMs.txt file officially supported by ChatGPT and Google? Not officially, at least not yet. The LLMs.txt standard is still emerging, and no major AI platform has publicly stated that it uses the file as a ranking signal. That said, major companies including Anthropic and Cloudflare have already implemented it on their own sites, and Google has reportedly been observed crawling LLMs.txt files. The implementation cost is low, the downside is negligible, and the potential upside grows as the standard matures.